BLOG

The Best Homemade Chocolate Cake Recipe: Easy, Moist & Foolproof

Looking for the best homemade chocolate cake recipe? Your search ends here. This from-scratch, one-bowl chocolate cake uses simple pantry staples to create an incredibly moist, rich, and decadent dessert that rivals any bakery cake. Whether you’re a beginner baker or an experienced home cook, this foolproof recipe delivers deep chocolate flavor and a tender crumb every single time. The secret? A cup of hot liquid (boiling water, or hot coffee to amplify the chocolate) and an optional sour cream swap for extra moisture. Perfect for birthdays, celebrations, or any day you need a chocolate fix, this is the only chocolate cake recipe you’ll ever need.

Why You’ll Love This Homemade Chocolate Cake

- One-bowl simplicity: No complicated steps or multiple mixing bowls to clean

- Incredibly moist crumb: Stays soft for days thanks to our secret ingredients

- Deep chocolate flavor: Enhanced with coffee and quality cocoa powder

- Forgiving for beginners: Hard to mess up with clear instructions and helpful tips

- Easy to customize: Works with countless variations, dietary substitutions, and pan sizes

Chocolate Cake Ingredients & Simple Substitutions

Ingredient Notes & Why They Matter

Understanding your ingredients is the first step to baking success. Here’s what makes this chocolate cake recipe work:

Unsweetened Cocoa Powder: The foundation of chocolate flavor. Natural cocoa powder (reddish-brown, acidic) creates a lighter, fruitier chocolate taste, while Dutch-process cocoa (darker, alkalized) produces a deeper, mellower flavor. Either works beautifully in this recipe.

Baking Soda & Baking Powder: These leavening agents work together to create the perfect rise. Baking soda reacts with acidic ingredients such as natural cocoa (and buttermilk or sour cream, if you use those swaps), while baking powder provides additional lift. Using both ensures a fluffy, tender cake.

Room Temperature Eggs: Cold eggs don’t emulsify properly with other ingredients, leading to a denser cake. Let eggs sit at room temperature for 30 minutes before baking, or place them in warm water for 5 minutes.

Vegetable Oil: Unlike butter, oil creates an exceptionally moist cake that stays soft even when refrigerated. It also makes mixing easier since you don’t need to cream butter and sugar.

Boiling Water: This secret ingredient “blooms” the cocoa powder, intensifying its chocolate flavor and creating a smoother batter. Don’t skip this step.

Common Substitutions & Variations

| Ingredient | Substitution | Effect |
|---|---|---|
| Whole Milk | Buttermilk or sour cream | Richer flavor, moister texture |
| All-Purpose Flour | 1:1 Gluten-Free Flour Blend | Makes it gluten-free (add ½ tsp xanthan gum if blend doesn’t include it) |
| Boiling Water | Hot Brewed Coffee | Amplifies chocolate flavor without tasting like coffee |
| Granulated Sugar | Mix of white & brown sugar (1 cup each) | Deeper caramel notes and extra moisture |
| Vegetable Oil | Melted coconut oil or melted butter | Slight flavor variation; butter makes it slightly less moist |
| Eggs | Flax eggs (2 tbsp ground flax + 6 tbsp water for the 2 eggs) | Makes it vegan (use with non-dairy milk) |

Essential Tools for Baking at Home

Having the right equipment makes baking easier and more successful:

- Two 9-inch round cake pans (metal pans work best)

- Parchment paper for lining pans

- Large mixing bowl

- Whisk or wooden spoon (no electric mixer needed for the cake)

- Rubber spatula for scraping

- Wire cooling rack

- Toothpick or cake tester for checking doneness

- Electric mixer (only needed for frosting)

- Offset spatula for frosting (optional but helpful)

Pro Tip: Cake strips (fabric strips you soak and wrap around pans) help cakes bake evenly with flat tops, making frosting much easier.

Step-by-Step Instructions for the Perfect Cake

1. Prep Pans & Preheat

Preheat your oven to 350°F (175°C). Grease two 9-inch round cake pans with butter or cooking spray, then line the bottoms with parchment paper circles. Grease the parchment too. This double insurance ensures your cakes release perfectly every time.
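If your oven dial is marked in Celsius, the conversion is simple arithmetic. Here is a minimal Python sketch of it; the function names and the rounding to the nearest 5 degrees are assumptions made for illustration, not part of the recipe.

```python
def fahrenheit_to_celsius(temp_f: float) -> float:
    """Exact conversion: C = (F - 32) * 5 / 9."""
    return (temp_f - 32) * 5 / 9


def oven_dial_celsius(temp_f: float, step: int = 5) -> int:
    """Round the exact value to the nearest dial mark (assumed: 5-degree steps)."""
    return int(round(fahrenheit_to_celsius(temp_f) / step) * step)


print(round(fahrenheit_to_celsius(350), 1))  # exact value, one decimal place
print(oven_dial_celsius(350))                # nearest 5-degree dial mark
```

The exact figure for 350°F is a little under 177°C, which is why recipes usually round it to 175°C.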

2. Combine Dry Ingredients

In a large bowl, whisk together 1¾ cups all-purpose flour, 2 cups granulated sugar, ¾ cup unsweetened cocoa powder, 2 teaspoons baking soda, 1 teaspoon baking powder, and 1 teaspoon salt.

Measuring Tip: To measure flour correctly and avoid a dry cake, spoon flour into your measuring cup and level it off with a knife. Never scoop directly from the bag, which compacts the flour and adds too much.
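If you want a single layer or a double batch, the dry ingredients from step 2 can be scaled with exact fractions so the amounts stay in cup and teaspoon measures. This is a rough sketch: the `scale` helper is hypothetical, and it assumes simple proportional scaling. Bake time and pan size do not scale the same way, so check doneness as usual.

```python
from fractions import Fraction

# Dry ingredients from step 2, in the units the recipe uses.
DRY_INGREDIENTS = {
    "all-purpose flour (cups)": Fraction(7, 4),
    "granulated sugar (cups)": Fraction(2),
    "unsweetened cocoa powder (cups)": Fraction(3, 4),
    "baking soda (tsp)": Fraction(2),
    "baking powder (tsp)": Fraction(1),
    "salt (tsp)": Fraction(1),
}


def scale(ingredients, factor):
    """Multiply every amount by the same factor (assumed: linear scaling)."""
    return {name: amount * Fraction(factor) for name, amount in ingredients.items()}


half_batch = scale(DRY_INGREDIENTS, Fraction(1, 2))
for name, amount in half_batch.items():
    print(f"{name}: {amount}")
```

A half batch works out to ⅞ cup flour, 1 cup sugar, and ⅜ cup cocoa, which is easier to measure as ¼ cup plus 2 tablespoons.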

3. Whisk Wet Ingredients

Add 2 room temperature eggs, 1 cup whole milk, ½ cup vegetable oil, and 2 teaspoons vanilla extract to the dry ingredients. Whisk until just combined. The batter will be thick at this point.

Why Room Temperature Matters: Room temperature eggs blend smoothly into the batter, creating better structure. Cold eggs can cause the batter to curdle or mix unevenly.

4. Mix & Add Hot Liquid

Carefully stir in 1 cup of boiling water (or hot coffee for enhanced flavor). The batter will become very thin and pourable—this is exactly what you want. This hot liquid “blooms” the cocoa powder, unlocking deeper chocolate flavor and creating that signature moist texture.

5. Bake & Cool Completely

Divide batter evenly between prepared pans. Bake for 30-35 minutes, until a toothpick inserted in the center comes out clean or with just a few moist crumbs. The tops should spring back when lightly touched.

Cool in pans for 10 minutes, then turn out onto wire racks to cool completely (at least 1 hour) before frosting. Frosting a warm cake will cause the frosting to melt and slide off.

Testing Doneness: Insert a toothpick in the center. It should come out clean or with a few moist (not wet) crumbs. If it comes out with wet batter, bake 3-5 minutes longer.

Rich & Fluffy Chocolate Frosting

This classic chocolate buttercream perfectly complements the moist cake layers:

Ingredients:

- 1 cup (2 sticks) unsalted butter, softened

- 3½ cups powdered sugar

- ½ cup unsweetened cocoa powder

- ½ cup heavy cream or whole milk

- 2 teaspoons vanilla extract

- ¼ teaspoon salt

Instructions: Beat softened butter on medium speed for 2 minutes until creamy. Add powdered sugar and cocoa powder, mixing on low until combined. Add cream, vanilla, and salt. Beat on high speed for 3-4 minutes until light and fluffy. If too thick, add more cream one tablespoon at a time. If too thin, add more powdered sugar.

Easy Frosting & Decoration Tips for Beginners

The Crumb Coat Method: Apply a thin layer of frosting to seal in crumbs, then refrigerate for 15 minutes. This makes the final frosting layer smooth and professional-looking.

Smooth Sides: Use an offset spatula or butter knife held at a 45-degree angle. Turn the cake stand or plate as you smooth for even coverage.

Simple Piping: Use a piping bag with a star tip to create decorative borders or rosettes. No fancy skills needed—simple swirls look bakery-worthy.

Garnishing Ideas: Top with chocolate shavings, fresh berries, chocolate chips, or a dusting of cocoa powder for an elegant finish.

Expert Tips for a Foolproof Cake Every Time

- The Coffee Secret: Adding hot coffee instead of water intensifies chocolate flavor without making the cake taste like coffee. Even coffee-haters won’t detect it, but the chocolate will taste richer and more complex.

- Sour Cream Swap: Replace ½ cup of milk with ½ cup sour cream for an ultra-moist, tangy cake with even better texture. This simple swap creates a more tender crumb.

- Flat Layers: Use cake strips, or reduce the oven temperature to 325°F (about 165°C) and bake 5 minutes longer. Either approach helps prevent domed tops that need trimming.

- Don’t Overmix: Once you add the hot liquid, stir just until combined. Overmixing develops gluten, leading to a tough cake.

- Proper Cooling: Let cakes cool completely before frosting. If short on time, turn the layers out of their pans and place them in the freezer for 15 minutes to finish cooling.

- Pan Preparation: Don’t skip greasing AND lining with parchment. This double method ensures easy release every time.

Your Chocolate Cake Questions Answered (FAQs)

Why is my chocolate cake dry?

The most common causes are overmeasuring flour (spoon it into the cup and level it off rather than scooping), overbaking (check doneness early), or using old baking powder or baking soda. Make sure to measure carefully and check your cake 5 minutes before the timer goes off.

Can I make this cake ahead or freeze it?

Absolutely. Baked cake layers can be wrapped tightly in plastic wrap and frozen for up to 3 months. Thaw overnight in the refrigerator before frosting. Frosted cake can be frozen whole for up to 1 month—freeze unwrapped until solid, then wrap. The frosting can also be made 3 days ahead and stored in the refrigerator; bring to room temperature and rewhip before using.

What’s the best cocoa powder to use?

For this recipe, both natural and Dutch-process cocoa work well. Budget-friendly brands like Hershey’s deliver great results. For premium flavor, try Ghirardelli, Valrhona, or Guittard. Avoid hot chocolate mix, which contains sugar and milk powder.

How can I make this gluten-free or vegan?

For gluten-free, use a 1:1 gluten-free flour blend (like Bob’s Red Mill) and add ½ teaspoon xanthan gum if your blend doesn’t include it. For vegan, replace eggs with flax eggs (1 tbsp ground flax + 3 tbsp water per egg, let sit 5 minutes), use non-dairy milk, and substitute vegan butter in the frosting.
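The flax egg ratio above (1 tbsp ground flax plus 3 tbsp water per egg) scales directly with the number of eggs you replace. A minimal sketch, with a hypothetical helper name:

```python
def flax_egg_amounts(eggs: int) -> tuple[int, int]:
    """Return (tbsp ground flax, tbsp water) for the given number of eggs.

    Uses the ratio from the FAQ: 1 tbsp flax + 3 tbsp water per egg.
    """
    if eggs < 0:
        raise ValueError("eggs must be zero or more")
    return eggs * 1, eggs * 3


flax_tbsp, water_tbsp = flax_egg_amounts(2)  # this recipe uses 2 eggs
print(f"{flax_tbsp} tbsp ground flax + {water_tbsp} tbsp water")
```

For the 2 eggs in this cake, that comes to 2 tbsp ground flax and 6 tbsp water.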

Why did my cake sink in the middle?

A sunken center usually means the cake was underbaked, the oven temperature was too low, or you opened the oven door too early. Use an oven thermometer to verify temperature, and avoid opening the oven before the 25-minute mark.

Can I bake this in different pan sizes?

Yes! For a 9×13 sheet cake, bake 35-40 minutes. For cupcakes, fill liners ⅔ full and bake 18-22 minutes. For three 8-inch layers, bake 25-30 minutes. Adjust baking time and check for doneness with a toothpick.
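The bake times above, plus the 30 to 35 minutes for two 9-inch rounds, fit in a small lookup table. The sketch below also applies the earlier advice to check about 5 minutes before the timer goes off. The helper name is hypothetical, and it assumes you set the timer to the low end of each range.

```python
# Bake-time ranges in minutes, taken from the recipe and the FAQ answer.
BAKE_MINUTES = {
    "two 9-inch rounds": (30, 35),
    "9x13 sheet": (35, 40),
    "cupcakes": (18, 22),
    "three 8-inch rounds": (25, 30),
}


def first_check_minute(pan: str, early_by: int = 5) -> int:
    """Minute at which to do the first toothpick test.

    Assumption: the timer is set to the low end of the range,
    and the first check happens `early_by` minutes before that.
    """
    low, _high = BAKE_MINUTES[pan]
    return low - early_by


for pan, (low, high) in BAKE_MINUTES.items():
    print(f"{pan}: bake {low}-{high} min, first check at {first_check_minute(pan)} min")
```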

What’s the difference between using cocoa powder and melted chocolate?

Cocoa powder creates a more intense, pure chocolate flavor and lighter texture. Melted chocolate adds richness but can make the cake denser. This recipe is optimized for cocoa powder.

How can I make my chocolate cake more rich and decadent?

Use hot coffee instead of water, add an extra tablespoon of cocoa powder, use Dutch-process cocoa for deeper flavor, or brush layers with simple syrup before frosting. You can also use chocolate ganache instead of buttercream.

Storing & Serving Your Chocolate Cake

Room Temperature Storage: A frosted chocolate cake stays fresh at room temperature for 2-3 days when covered with a cake dome or loosely tented with foil. This is ideal if you plan to serve it within this timeframe and live in a moderate climate.

Refrigerator Storage: For longer storage or in warm weather, refrigerate the frosted cake for up to 5 days. Cover tightly or store in an airtight container. Bring to room temperature 30 minutes before serving for the best texture and flavor.

Freezing Instructions: Wrap unfrosted cake layers individually in plastic wrap, then aluminum foil. Freeze for up to 3 months. Frosted cake can be frozen whole—freeze uncovered until firm, then wrap securely. Thaw overnight in the refrigerator.

Keeping Cake Moist: Store with a slice of bread in the container to maintain moisture, or brush layers with simple syrup (equal parts sugar and water, heated until dissolved) before frosting.

Serving Suggestions: Pair with vanilla ice cream, fresh berries, a glass of cold milk, or hot coffee. For an extra-special presentation, serve with chocolate sauce or raspberry coulis drizzled on the plate.

Complete Recipe Card

Prep Time: 15 minutes

Cook Time: 35 minutes

Total Time: 50 minutes (plus cooling)

Servings: 12-16 slices

Calories: Approximately 380 per slice (with frosting)

This tried-and-true homemade chocolate cake recipe delivers bakery-quality results using simple ingredients you likely have in your pantry. The one-bowl method makes it perfect for beginners, while the exceptional flavor and texture satisfy even the most discerning chocolate lovers. Make it once, and it’ll become your go-to recipe for every celebration.

EroThots Explained: Honest 2026 Guide to the Leaked OnlyFans Site

EroThots (primarily at domains like erothots.co, erothots1.com, or erothots.is) is a free adult tube-style site specializing in leaked and aggregated content from OnlyFans, Fansly, Reddit, and similar subscription platforms. It hosts videos, images, gifs, and clips featuring OnlyFans models, pornstars, and amateur creators. In 2026, with OnlyFans still dominant and piracy concerns growing, sites like this remain popular for zero-cost access but come with real trade-offs in quality, legality, and security.

We’ll walk through what the platform offers, how it operates, the types of content, privacy and legal realities, comparisons to official sources, common myths, and practical advice. No judgment, just clear details so you can decide for yourself.

What Is EroThots?

EroThots functions as a large aggregator and hosting site for adult material that originates elsewhere. Users upload explicit videos, photos, and short clips, or the site scrapes them; these are often full-length OnlyFans sessions, custom requests, or public teases that get reposted. It emphasizes “leaked” content from popular creators, with categories covering everything from solo performances to hardcore scenes.

The site keeps things simple: search by model name, keyword (e.g., “onlyfans girls,” specific performers), or tags. No mandatory account for basic browsing, though ads and pop-ups are common. It includes sections for videos, image albums, and sometimes gifs or AI-generated porn teasers.

How EroThots Works and What You’ll Find

The platform operates like many free adult tubes: content gets indexed or mirrored quickly after it appears on paid services. Popular searches pull up high-view clips from trending creators, with thumbnails, durations, and basic metadata. Quality varies: some uploads are crisp 4K, others are lower resolution or watermarked.

Typical content types:

- Full or partial OnlyFans videos (solo, boy/girl, fetish)

- Photo sets and albums from subscription pages

- Short clips and gifs for quick viewing

- Leaked custom content or “PPV” (pay-per-view) material

- Occasional live stream recordings or Reddit-sourced posts

Navigation relies on search and category browsing. The site claims 2257 compliance (U.S. record-keeping for adult performers) and has report functions, but enforcement on piracy remains limited.

Safety, Legality, and Practical Concerns in 2026

Browsing EroThots exposes you to heavy advertising, potential malware risks from pop-ups, and trackers. While some trust checkers rate the main domains as “likely safe” for basic access, adult sites in general carry higher chances of redirects or unwanted downloads. Use ad blockers, updated browsers, and avoid clicking suspicious links.

Legally, the core issue is unauthorized distribution. Much of the “leaked” material violates creators’ copyrights and terms of service on OnlyFans and similar platforms. Downloading or sharing can lead to account bans, legal notices, or worse in extreme cases. Creators frequently complain about their paid work appearing free elsewhere, hurting their income.

Comparison Table: EroThots vs Official Subscription Platforms

| Aspect | EroThots (Free Leaks) | OnlyFans / Fansly (Paid) |
|---|---|---|
| Cost | Free | Subscription or PPV fees |
| Content Freshness | Often delayed or partial leaks | Immediate, full access for subscribers |
| Quality & Completeness | Variable, sometimes edited or low-res | Creator-controlled, higher consistency |
| Creator Support | None (harms earnings) | Direct revenue for models |
| Safety & Privacy | Higher ad/malware risk, tracking | Better controls, but still platform data collection |
| Legal/Ethical | Piracy concerns | Authorized, consensual |

Paid platforms win on ethics and reliability; free aggregators win on zero upfront cost but lose on everything else.

Myth vs Fact

Myth: Everything on EroThots is completely free and safe to download. Fact: “Free” often means ad-supported with risks, and downloads can include malware or expose your device. Plus, the content itself may be stolen.

Myth: Leaked OnlyFans sites like EroThots don’t hurt creators. Fact: They directly cut into subscription revenue. Many models report lost income and increased harassment when private content leaks.

Myth: These sites are official partners or mirrors of OnlyFans. Fact: They have no affiliation. OnlyFans actively fights leaks and can ban accounts involved in distribution.

Myth: Using an ad blocker makes EroThots risk-free. Fact: It reduces some dangers but doesn’t eliminate tracking, potential zero-day exploits, or the legal gray area of consuming pirated material.

Statistical Proof and Broader Context

Adult content consumption stays massive, with free tube sites and leak aggregators drawing tens of millions of monthly visitors. EroThots variants reportedly pull significant U.S. traffic. Meanwhile, OnlyFans itself has grown its subscriber base, but piracy remains a persistent challenge for creators, with many reporting substantial revenue loss from unauthorized sharing.

AI-generated adult content has also surged, and some leak sites now mix in or promote it alongside real leaks.

Insights from Observing Adult Content Trends

Having followed the adult industry and digital content platforms through shifts from tube sites to subscription models and now AI influences, one lesson repeats: the “free” options almost always come with hidden costs, whether lost creator income, security headaches, or lower satisfaction over time. A common mistake? Assuming all leaks are victimless, or that one site is dramatically safer than others, without practicing habits like strong antivirus and minimal personal data exposure.

EroThots fits the classic aggregator mold: convenient for casual browsing but rarely the best long-term choice. Real-world experience shows that supporting creators directly often yields better content, community, and peace of mind. No single site review replaces your own risk assessment: check recent user feedback on forums, use VPNs if privacy matters, and remember that platforms evolve (domains shift, content gets removed).

FAQs

What is EroThots exactly?

EroThots is a free adult website that aggregates and hosts leaked videos, photos, and clips primarily from OnlyFans and similar subscription services. It allows browsing explicit content without payment, focusing on amateur models and pornstars.

Is EroThots safe to use?

It carries typical risks of free adult sites: intrusive ads, potential malware from pop-ups, and tracking. Some checkers rate the domains as low-to-medium risk, but using ad blockers, antivirus, and avoiding downloads improves safety. Never enter personal info.

Is using EroThots legal?

Consuming leaked content often involves copyrighted material distributed without permission, raising legal and ethical issues. While prosecution for viewers is rare, it violates platform terms and harms creators. Stick to authorized sources for fewer worries.

Does EroThots have official OnlyFans content?

It specializes in unauthorized leaks and reposts. Official OnlyFans material is only available through paid subscriptions on the actual platform.

What are good alternatives to EroThots?

Paid options like OnlyFans, Fansly, or ManyVids give direct creator support and full access. For free legal content, try mainstream tubes with original uploads or creator teasers. For ethical free viewing, seek public social media posts from models.

Why do people search for “erothtos”?

It’s a common misspelling or shorthand for EroThots when looking for free leaked OnlyFans videos and adult images. High search volume reflects demand for no-cost explicit material.

Conclusion

In short, EroThots is a piracy-driven aggregator of leaked OnlyFans and amateur adult content, and it sits at the center of the ongoing tension between free access and the paid platforms that support creators.

The adult content landscape in 2026 keeps shifting with stronger creator tools, AI generation, and crackdowns on unauthorized sharing. What doesn’t change is the value of informed choices: balancing convenience against real risks and ethics.

OpenFuture.World: The Definitive 2026 Guide to the Global Open Banking Knowledge Hub

You’re probably here because the name OpenFuture.World surfaced in a search for open banking updates, fintech directories, or industry intelligence, and you want straight answers: Is this a reliable source? What does it actually offer? And does it help cut through the noise in a fast-moving sector?

Your deeper need is practical: finding a centralized place to track real progress in open banking and open finance without wading through hype, scattered news, or outdated lists. OpenFuture.World (openfuture.world) positions itself as the largest global source of information on advancements in open banking and beyond. In 2026, with open finance expanding rapidly across regions like Europe, the UK, Brazil, and Asia, having one hub for directories, curated news, and connections feels increasingly valuable.

What Is OpenFuture.World?

OpenFuture.World serves as a dedicated knowledge hub and directory focused on open banking, open finance, and related innovations. It aggregates and curates information to help users discover companies, track news, find events, and connect with peers in the sector.

Unlike a single fintech product or bank API, it functions as an intelligence platform. It highlights “who’s who” and “what’s worth paying attention to” through free resources: a searchable business directory with thousands of entries, daily news curation, articles, presentations, and event listings.

The site emphasizes progress in secure data sharing, third-party provider integration, and innovative financial services enabled by open standards. It covers both regulated entities and emerging players, making it useful for developers, banks, fintech founders, and analysts.

Core Features and How It Works

The platform stands out for its focused, no-frills approach to sector intelligence:

- Business Directory: A searchable database of organizations involved in open banking and finance. Entries include profiles on companies like TrueLayer (financial infrastructure), Envestnet | Yodlee (data aggregation), and Token (banking-enabled commerce). Users browse or search for prospects, partners, or competitive intelligence.

- Curated News and Articles: Daily or regular updates on developments, from regulatory shifts to new product launches and cybersecurity lessons.

- Events and Congress: Listings and details for gatherings like the Open Banking World Congress, designed for efficient networking and insights.

- Rankings and Analysis: Periodic global or thematic rankings that spotlight leading organizations, countries, and individuals driving progress.

Practical uses:

- Quickly find and evaluate potential partners or vendors in open banking APIs.

- Stay updated on cross-border developments without following dozens of sources.

- Discover emerging players in data analytics, consent management, or embedded finance.

- Prepare for events or pitches with background on key companies.

- Track broader themes like AI agents in payments or blockchain for consent.

The content tone leans professional and forward-looking, aimed at industry insiders who need actionable intelligence rather than consumer-facing explanations.

Open Banking and Open Finance Context in 2026

Open banking enables secure sharing of financial data with authorized third parties via APIs, with user consent at the center. Open finance extends this to insurance, investments, pensions, and more. In 2026, adoption varies: Brazil leads with high consumer uptake tied to instant payments, while Europe and the UK refine post-PSD2 frameworks, and other regions build foundational infrastructure.
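To make “user consent at the center” concrete, here is a minimal Python sketch of the kind of check a data holder might run before answering a third party’s request. Everything in it is hypothetical and for illustration only: the `Consent` fields, the scope strings, and the `may_access` helper do not come from any real open banking standard or from OpenFuture.World.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Consent:
    """A user's grant to one third party (hypothetical model)."""
    user_id: str
    third_party: str
    scopes: frozenset
    expires_at: datetime


def may_access(consent: Consent, third_party: str, scope: str, now: datetime) -> bool:
    """Allow a request only if it matches the grant and the grant is still valid."""
    return (
        consent.third_party == third_party
        and scope in consent.scopes
        and now < consent.expires_at
    )


now = datetime(2026, 1, 1, tzinfo=timezone.utc)
consent = Consent(
    user_id="user-123",
    third_party="budget-app",
    scopes=frozenset({"accounts:read"}),
    expires_at=now + timedelta(days=90),
)

print(may_access(consent, "budget-app", "accounts:read", now))   # True
print(may_access(consent, "budget-app", "payments:write", now))  # False
```

The point of the sketch is that access is scoped and time-limited: the same third party is refused once it asks for something the user did not grant, or after the grant expires.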

OpenFuture.World tracks this uneven global progress, highlighting successes in personalized services, competition that benefits consumers, and challenges around trust, security, and interoperability.

Comparison Table: OpenFuture.World vs General News Sites and Broader Directories

| Aspect | OpenFuture.World | General News Sites (e.g., Finextra, TechCrunch) | Broader Directories (e.g., Crunchbase) |
|---|---|---|---|
| Focus | Deep open banking & open finance | Broad fintech and tech | All startups and funding |
| Directory Depth | Specialized profiles and links | Limited or none | Wide but less sector-specific |
| Content Style | Curated, analytical | Fast-breaking news | Company data and metrics |
| Free Access | Strong emphasis on free resources | Often ad-supported or paywalled | Basic free, premium for details |
| Best For | Industry professionals and researchers | General awareness | Investment scouting |

This hub shines when you need targeted, sector-specific depth rather than volume.

Myth vs Fact

Myth: OpenFuture.World is a fintech platform or bank service where you can directly access open banking APIs. Fact: It is an information and discovery hub, not a technical infrastructure provider. Use it to learn about and connect with actual API builders like TrueLayer or Yodlee.

Myth: All open banking directories are basically the same. Fact: Specialization matters. OpenFuture.World emphasizes global progress, rankings, and curated insights tailored to open finance, which sets it apart from generic startup lists.

Myth: Open finance is only relevant in Europe due to PSD2. Fact: Momentum is global. Regions like Brazil show strong consumer adoption, and many markets are implementing or expanding similar frameworks in 2026.

Myth: These hubs just republish press releases with no real value. Fact: Quality curation and targeted directories save significant research time, especially when tracking thousands of organizations across borders.

Statistical Proof and Market Context

Open finance continues expanding. Consumer willingness to share data for better experiences remains high, with reports indicating significant potential shifts in financial services value. Cybersecurity incidents in fintech stayed prominent in 2025, underscoring the need for robust consent and security practices that many directory-listed companies address.

Directories like this help navigate a landscape with thousands of players, from established data aggregators to innovative consent management solutions using blockchain or AI.

Insights from Following Fintech Intelligence Platforms

Having tracked open banking developments since the early PSD2 days through multiple regulatory cycles and regional rollouts, one pattern stands clear: professionals who succeed fastest combine technical knowledge with strong ecosystem awareness. A common mistake? Relying solely on broad news feeds and missing nuanced, sector-specific signals on who is actually shipping usable infrastructure.

OpenFuture.World fills that gap with its focused directory and curation. It isn’t perfect (no single hub captures every development), but its emphasis on free access and global scope makes it a solid starting point. From evaluating similar resources over the years, the most useful ones prioritize transparency (clear about being informational, not advisory) and freshness. Always cross-reference directory entries with official company sites and recent regulatory filings for the fullest picture.

FAQs

What exactly is OpenFuture.World?

OpenFuture.World is a global knowledge hub and directory dedicated to open banking and open finance. It offers a searchable database of companies, curated news, articles, event information, and rankings to help professionals track progress and make connections in the sector.

Is OpenFuture.World an official platform or a news site?

It functions primarily as an independent information hub rather than an official regulatory body or technical API platform. It curates content and maintains a directory to support discovery and learning across the open finance ecosystem.

What can I find in the OpenFuture.World directory?

You’ll discover profiles of fintech companies, data aggregators, API providers, and other organizations involved in open banking. Examples include TrueLayer, Envestnet | Yodlee, and Token, with details to help identify potential partners or understand market players.

How does OpenFuture.World help with open banking trends in 2026?

It surfaces daily news, analysis, and events focused on data sharing, consent management, regulatory updates, and innovations like AI in payments. This keeps users informed on global developments without needing to monitor dozens of separate sources.

Is the content on OpenFuture.World free to access?

Yes, the platform emphasizes free resources including the directory, news, and basic event information. This approach aims to lower barriers for discovering and engaging with the open finance community.

Who should use OpenFuture.World?

Fintech professionals, bank innovation teams, developers building financial applications, analysts, and anyone needing reliable intelligence on open banking and open finance advancements benefit most from its focused resources.

Conclusion

In short, OpenFuture.World brings together a specialized global directory, curated news and analysis, and events like the Open Banking World Congress to help professionals discover the companies driving secure data exchange and innovation in open banking and open finance.

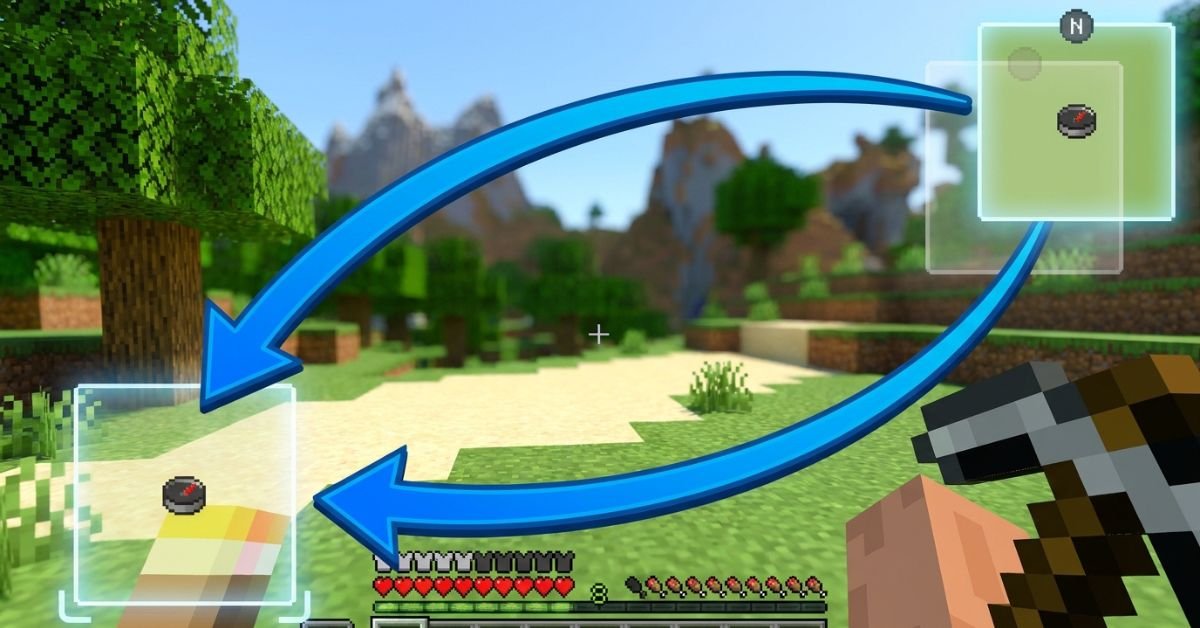

JourneyMap Minimap in the Wrong Spot? Fix the Position Fast With This Step-by-Step Method

Your JourneyMap minimap sits stubbornly in the top right, covering part of your view or clashing with other HUD mods, and you just want it moved without breaking anything.

JourneyMap remains one of the most popular and powerful minimap mods for Minecraft Java Edition. It gives you a live radar-style minimap, full-screen mapping, waypoints, cave mapping, and deep customization. In 2026, with Minecraft 1.21+ and newer Fabric/Forge versions, the minimap positioning system is more flexible than ever, including true custom dragging.

Understanding JourneyMap’s Minimap System

JourneyMap displays a small, real-time map in one corner of your screen by default (usually top right). It shows terrain, mobs, players, waypoints, and info like coordinates or biome.

The mod supports two independent minimap presets. Each preset can have its own position, style (square/circular), zoom, displayed elements, and opacity. Switch between them instantly with a single keypress.

Key hotkeys you’ll use often:

- J: Open full-screen map (and access settings from there)

- Ctrl + J: Toggle minimap visibility

- \ (backslash): Switch between minimap presets

- = / -: Zoom minimap in/out

- [: Cycle map types (terrain, cave, etc.)

Position options include: Top Right, Bottom Right, Bottom Left, Top Left, Top Center, Center, and Custom.
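If it helps to picture what these named positions mean on screen, here is a minimal sketch of how an anchor name could translate into pixel coordinates. It is an illustration only, not JourneyMap's actual code: the function name `minimap_origin`, the 4-pixel margin, and the example screen and minimap sizes are all assumptions made up for this example.

```python
# Hypothetical illustration only: this is NOT JourneyMap's code or API.
# Each named position is treated as a fraction of the free screen space.
ANCHORS = {
    "Top Left": (0.0, 0.0),
    "Top Center": (0.5, 0.0),
    "Top Right": (1.0, 0.0),
    "Center": (0.5, 0.5),
    "Bottom Left": (0.0, 1.0),
    "Bottom Right": (1.0, 1.0),
}


def minimap_origin(position, screen_w, screen_h, map_size, margin=4, custom=None):
    """Return the top-left pixel (x, y) of a square minimap."""
    if position == "Custom":
        # Custom has no fixed anchor; the player supplies the coordinates.
        if custom is None:
            raise ValueError("Custom position needs explicit (x, y) coordinates")
        return custom
    fx, fy = ANCHORS[position]
    # Space left over once the minimap and both margins are accounted for.
    free_w = screen_w - map_size - 2 * margin
    free_h = screen_h - map_size - 2 * margin
    return (margin + round(fx * free_w), margin + round(fy * free_h))


print(minimap_origin("Top Right", 1920, 1080, 256))  # (1660, 4)
print(minimap_origin("Center", 1920, 1080, 256))     # (832, 412)
```

The fixed positions snap to an edge or the middle of the screen, while Custom simply uses whatever coordinates you give it, which is why it is the most flexible option.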

Step-by-Step: How to Change Minimap Position

Method 1: Quick Preset Changes (Easiest for Most Players)

- Press J to open the full-screen map.

- Click the Settings icon (gear) at the bottom, or press O.

- Navigate to Minimap (or Minimap Preset 1 / Preset 2).

- Find the Position dropdown.

- Choose from Top Right, Bottom Right, Bottom Left, Top Left, Top Center, or Center.

- Close the menu; changes apply immediately.

You can configure Preset 1 and Preset 2 differently, then switch live with the \ (backslash) key. This lets you have one clean minimap for exploration and another packed with info for building or PvP.
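The two-preset idea can be pictured as two independent bundles of settings with a toggle between them. The sketch below is a rough model under that assumption, not how the mod is implemented: `MinimapPreset`, `MinimapState`, and the example field values are hypothetical names chosen for illustration.

```python
# Hypothetical model of two independent presets; not JourneyMap's code.
from dataclasses import dataclass


@dataclass
class MinimapPreset:
    position: str    # e.g. "Top Right" or "Custom"
    shape: str       # "square" or "circle"
    show_mobs: bool  # whether mob icons are drawn


class MinimapState:
    """Holds both presets and tracks which one is active."""

    def __init__(self, preset1, preset2):
        self.presets = [preset1, preset2]
        self.active = 0  # start on Preset 1

    def current(self):
        return self.presets[self.active]

    def switch(self):
        # What a preset-switch keypress would do: flip between index 0 and 1.
        self.active = 1 - self.active
        return self.current()


state = MinimapState(
    MinimapPreset(position="Top Right", shape="circle", show_mobs=False),
    MinimapPreset(position="Bottom Left", shape="square", show_mobs=True),
)
print(state.switch().position)  # Bottom Left
print(state.switch().position)  # Top Right
```

Because each preset carries its own position, changing one never disturbs the other, so you can experiment with Preset 2 and always switch back to a known-good Preset 1.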

Method 2: True Custom Position (Drag Anywhere)

- Open full-screen map with J → Settings.

- Set Position to Custom.

- Return to the game world.

- Hold the configured move key (or use arrow keys) to drag the minimap freely.

- Fine-tune with the Minimap Key Move Pixel Offset setting (default 0.001) for precise pixel-level control.

Custom mode gives you pixel-perfect placement anywhere on screen, which is ideal when other mods clutter the corners.

Method 3: In-Game Adjustments and Hotkeys

Some players prefer direct controls:

- Open settings via full-screen map for full access.

- Adjust related options like opacity, shape, info slots, and what displays (waypoints, players, mobs, light level, etc.).

Pro tip: After moving, test in different situations (underground caves, dense forests, or with shaders active), because render layers can shift slightly.

Comparison: Position Options in JourneyMap (2026)

| Position Option | Best For | Flexibility | Easy to Switch? | Notes |
|---|---|---|---|---|
| Top Right (Default) | Standard clean HUD | Low | Yes | Classic placement, rarely overlaps hotbar |
| Bottom Right | When top is crowded | Low | Yes | Good with action bars on left |
| Bottom Left | Players who read left-to-right | Low | Yes | Common with inventory-focused mods |
| Top Left | Minimal interference | Low | Yes | Avoid if you have chat or notifications |
| Top Center / Center | Dramatic or centered builds | Medium | Yes | Can feel intrusive during combat |
| Custom | Perfect personal HUD | Highest | Moderate | Drag freely + pixel offset tuning |

Custom wins for most experienced players once you spend five minutes setting it up.

Myth vs Fact

Myth: You can only put the minimap in the four corners. Fact: JourneyMap supports Top Center, Center, and full Custom drag mode for anywhere on screen.

Myth: Changing position requires editing config files manually. Fact: Everything is done in-game through the settings menu or hotkeys; no file editing is needed in recent versions.

Myth: The minimap resets position every time you restart Minecraft. Fact: Settings save per world/profile as long as you close the game properly.

Myth: Custom position only works with certain Minecraft versions. Fact: As of 2026 versions (1.21+), Custom drag and presets work reliably on Fabric, Forge, and NeoForge.

Real-World Insights From Years of Modded Play

After running JourneyMap in hundreds of modpacks across different Minecraft versions from 1.16 through 1.21+, the biggest mistake I see is players fighting the default top-right position instead of using the two presets properly. Set up one preset as a minimal radar for exploration and another fully loaded for base building or resource hunting. Switching between them with the \ (backslash) key is a night-and-day difference.

Another common issue: conflicts with shader packs or other HUD mods (like AppleSkin or inventory tweaks). Setting Position to Custom and nudging it a few pixels usually solves overlap instantly. In 2025–2026 testing, the in-game settings menu has become even more responsive, with changes applying without needing a relog.

FAQs

How do I move the JourneyMap minimap to a different corner?

Press J to open the full map, click Settings (or press O), go to Minimap settings, and change the Position dropdown to Bottom Right, Top Left, or any preset option. Changes apply live.

Can I drag the JourneyMap minimap anywhere on screen?

Yes. Set Position to Custom in the settings menu, then use arrow keys or the move control to drag it freely. Adjust the pixel offset for finer control.

How do I switch between two different minimap presets?

The default key is \ (backslash). Configure Preset 1 and Preset 2 separately with different positions, sizes, or displayed info, then switch on the fly.

Why can’t I move my JourneyMap minimap?

Make sure you’re not in a conflicting mod setup (like certain VR mods). Try setting Position to Custom, or check that the minimap isn’t disabled. Restarting the game or updating JourneyMap often fixes stubborn cases.

Does changing minimap position affect performance?

Position changes are purely visual and have zero impact on FPS. Adjust opacity or disable heavy features (like high-quality cave mapping) if you need performance gains instead.

Is there a way to completely hide or disable the minimap?

Yes. Use Ctrl + J to toggle it off quickly, or turn off “Show Minimap” in the settings for a permanent change.

Conclusion

Changing the minimap position in JourneyMap comes down to understanding presets, the Position dropdown, and Custom drag mode. Together, the two minimap presets, the position options (corners, center, and Custom), hotkeys like J and \ (backslash), and the in-game settings menu give you full control over how the mod fits your playstyle.
